When Platforms Harm: The Legal Battle Over Social Media Addiction

By Jared · Mar 14, 2026

From Content to Architecture: Redefining Platform Accountability

Among the many grounds on which regulators criticise social media platforms - disinformation, hate speech, harassment and extremist content - is their potential impact on young users whose minds are still developing. Increasingly, this debate stretches beyond the familiar disputes over online speech and into the architecture of the platforms themselves. Critics argue that social media services such as Instagram are deliberately engineered to maximise user engagement through features designed to capture and retain attention. When those users are children or adolescents, the consequences of such design choices may be particularly acute. The question therefore arises: can platforms be held legally liable for the harms allegedly caused by systems designed to be addictive?

Kaley’s Case: A Bellwether for Platform Accountability

A trial currently unfolding in Los Angeles may prove to be one of the most consequential attempts yet to hold social media companies legally accountable for the effects of their platforms on young users.

The claimant, a now 20-year-old woman known publicly only as “Kaley” to protect her privacy, alleges that her childhood mental health struggles were exacerbated - and in part caused - by prolonged use of social media platforms operated by Meta Platforms and Google. Her claim centres on the argument that features built into services such as Instagram and YouTube are intentionally designed to encourage compulsive engagement, particularly among younger users.

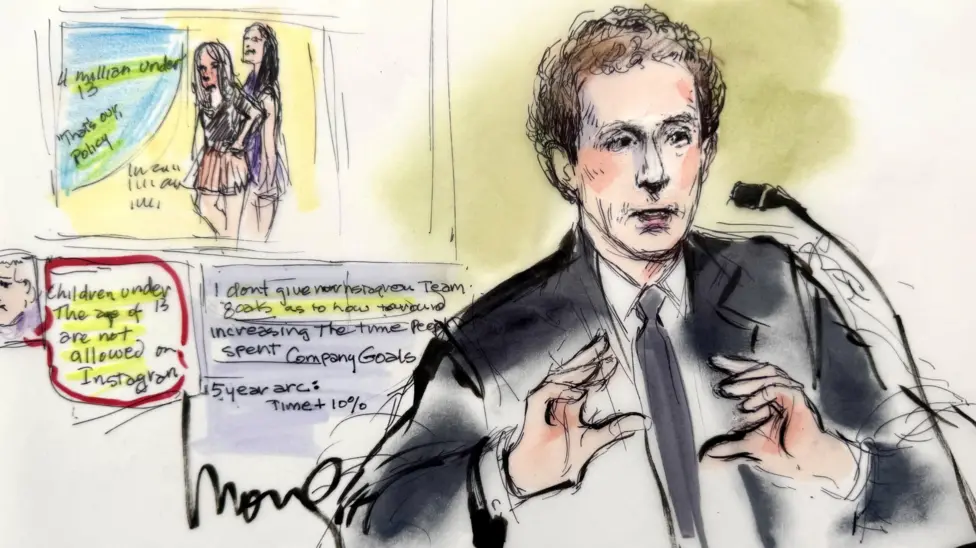

Courtroom sketch of Mark Zuckerberg's testimony. REUTERS/Mona Edwards

Kaley told the jury that she began using YouTube at the age of six and Instagram at nine. According to her testimony, social media quickly became a constant presence in her life: she would check notifications throughout the night, spend hours scrolling through content, and measure her self-worth by the number of “likes” her posts received. By her early teenage years she had begun experiencing anxiety and depression, and she later received a diagnosis of body dysmorphia. Her lawyers argue that the design of these platforms - including autoplay video, algorithmic recommendations, filters, and infinite scrolling - created an environment that encouraged addictive behaviour and exacerbated underlying psychological vulnerabilities.

As might be expected, the defence rejects this characterisation. Executives from Meta have argued that while excessive use of social media may be “problematic”, it does not constitute addiction in a medical or legal sense. The companies’ case instead focuses on alternative explanations for Kaley’s mental health difficulties, including family circumstances and broader environmental factors. The trial therefore centres on familiar questions of causation: whether the claimant’s injuries can properly be attributed to the design of the platforms themselves.

The litigation forms part of a much larger wave of claims currently progressing through the United States courts. Thousands of similar cases have been filed by families alleging that social media platforms contributed to serious mental health harms among young users, including depression, self-harm and suicide. Kaley’s claim is widely regarded as a bellwether case, meaning its outcome may shape the trajectory of the broader litigation.

Beyond Section 230: Holding Platforms Accountable for Design

The legal strategy adopted by the claimants reflects an attempt to navigate one of the most formidable barriers to platform liability in American law: Section 230 of the Communications Decency Act.

Section 230, enacted in 1996, provides that online platforms cannot generally be treated as the “publisher or speaker” of user-generated content. The provision effectively created a broad safe harbour that allowed internet platforms to host content produced by users without incurring the same legal liabilities faced by traditional publishers.

The scope of this immunity has been repeatedly confirmed by the courts. For example, in Zeran v America Online (1997), the US Court of Appeals for the Fourth Circuit held that AOL could not be held liable for defamatory posts made by a third party on its platform, even after it had been notified of the content. The decision established an expansive interpretation of Section 230 that has shaped internet law for decades.

Such immunity was widely regarded as essential to the development of the modern internet. Without it, platforms would have faced overwhelming legal exposure for the vast quantity of user-generated content posted every day. Faced with the threat of constant litigation, early internet services would likely have adopted aggressive moderation practices or avoided hosting large-scale forums altogether. In that sense, Section 230 played a crucial role in enabling the participatory culture that characterised the early internet. But the internet of 1996 is not the internet of today.

It is worth noting that the immunity under Section 230 is not absolute. In Fair Housing Council v Roommates.com (2008), the Ninth Circuit held that a platform could not rely on Section 230 where it materially contributed to the alleged unlawfulness of the content. Roommates.com required users to disclose protected characteristics such as sex, sexual orientation and familial status, and then used that information to filter and match potential roommates. Because the platform itself had designed the questions that elicited the allegedly discriminatory content, the court held that the Section 230 defence did not apply to that part of the service.

Kaley’s claim, KGM v Meta et al., seeks to push this boundary further. Rather than alleging that platforms failed to remove harmful content, the claim focuses on the design of the platforms themselves. In this framing, the relevant “product” is not the content shared by users but the system of algorithms, notifications and interface features that structure the user experience.

This shift from content to design reflects a broader evolution in the debate around technology regulation. For much of the early history of the internet, platforms were understood primarily as neutral intermediaries facilitating communication between users. In recent years, however, scholars and policymakers have increasingly emphasised the extent to which platform architecture shapes behaviour. On this view, features such as infinite scrolling, push notifications and personalised recommendation systems are not neutral tools but mechanisms designed to maximise engagement and retention.

Medicalised Likes

Critics argue that these systems exploit well-documented psychological mechanisms, particularly among younger users. Variable reward structures - where users receive unpredictable bursts of social validation through likes, comments or new content - have frequently been compared to the reward schedules used in gambling machines. From this perspective, the claim that social media platforms are engineered to encourage compulsive behaviour is not merely rhetorical but grounded in established behavioural research.

The debate surrounding the trial therefore extends beyond the specific facts of Kaley’s claim. It touches on a wider cultural shift in attitudes toward social media, particularly in relation to children and adolescents. Over the past decade, rising levels of reported anxiety, depression and self-harm among young people have prompted growing scrutiny of the role played by digital environments. Governments, academics and parents have increasingly questioned whether platforms designed primarily to maximise user engagement are compatible with the developmental needs of younger users.

Technology companies have generally resisted the characterisation of their products as inherently harmful. They argue that social media platforms provide valuable tools for communication, creativity and community building, and that the relationship between social media use and mental health outcomes remains complex and contested. Many researchers likewise caution against drawing simple causal conclusions from correlations between social media use and psychological distress.

Why It Matters: Legal, Cultural, and Global Implications

Translating these competing narratives into workable doctrines of liability presents considerable difficulty. Tort law traditionally requires claimants to demonstrate a clear causal connection between the defendant’s conduct and the harm suffered. In cases involving mental health outcomes shaped by a wide range of social and personal factors, establishing such causation can be particularly challenging.

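The defence’s reliance on the “but for” test reflects this difficulty. The test asks whether the harm would have occurred without the defendant’s conduct: if the harm might well have arisen even without the claimant’s exposure to social media, for instance because of family circumstances, liability becomes difficult to establish.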

Yet the case also illustrates a broader trend in modern litigation: the attempt to adapt existing legal frameworks to emerging forms of technological harm. Previous generations of mass litigation - most notably the tobacco and opioid cases - similarly revolved around allegations that companies knowingly created products with addictive properties while minimising or concealing the associated risks. Whether social media platforms ultimately come to be viewed in a comparable light remains an open question.

Although the litigation is unfolding in the United States, the issues it raises resonate internationally. In the United Kingdom, concerns about the potential harms of social media - particularly for children - have increasingly been addressed through regulatory rather than judicial mechanisms. The Online Safety Act 2023, enforced by Ofcom, imposes duties on platforms to assess and mitigate risks to users, including harms affecting children. While the American courts are now being asked to determine whether social media companies can be held liable in tort for “addictive design”, the UK has largely approached the issue through a framework of statutory oversight.

The United States has traditionally adopted a comparatively laissez-faire approach to technology regulation. Given the market dominance of US technology companies, any move towards imposing substantial liability on platforms for the behavioural consequences of their design choices could have an international impact, since design changes prompted by American litigation may well shape the products used by people worldwide. It is not unlikely that technology companies, whose executives maintain complex relationships with political actors in Washington, will lobby for expanded statutory protections should the courts prove willing to entertain such claims.

Whether KGM v Meta et al. marks the beginning of a new phase of platform accountability or simply the outer limit of tort law’s reach into the digital sphere remains to be seen. What is clear, however, is that the architecture of online platforms - once regarded as a purely technical matter - is no longer beyond the reach of legal scrutiny.

“The Internet used to be a Place”

In this wonderful video essay, media critic Sarah Davis Baker reflects on how the early internet functioned less as an endless stream of content and more as a series of discrete spaces. Users would enter websites much as they might enter rooms: “we moved through rooms, we crossed thresholds, we arrived… and we left.” Access often took place through a shared family computer, and online activity was bounded by both time and physical location. That was the internet as I first encountered it. Growing up through the 2010s, I watched as that architecture largely disappeared.

Contemporary social media platforms are structured not as spaces to visit but as environments designed to retain attention. Infinite scrolling feeds, algorithmic recommendations and constant notifications ensure that the user rarely encounters a natural stopping point. The experience is no longer one of entering and leaving discrete digital spaces, but of remaining within a continuous stream of personalised content.

The transformation is not merely aesthetic; it has profound legal implications. The regulatory assumptions that underpinned early internet law - that platforms function primarily as neutral hosts for user speech - sit uneasily with platforms whose core business model relies upon actively shaping what users see and how long they remain engaged. As the architecture of the internet has evolved, it has become increasingly pressing to ask whether the legal frameworks developed in the 1990s remain fit for purpose.